Customers have often asked me what I think of “Entity-attribute-value” (EAV) design. So I thought it would be a good idea to lay down my opinion in writing.

What is entity-attribute-value design?

Customers have often asked me what I think of “Entity-attribute-value” (EAV) design. So I thought it would be a good idea to lay down my opinion in writing.

The idea is not to create a table for each entity in the application. Rather, you store each attribute as a separate entry in an attribute table:

CREATE TABLE objects ( objectid bigint PRIMARY KEY /* other object-level properties */ ); CREATE TABLE attstring ( objectid bigint REFERENCES objects ON DELETE CASCADE NOT NULL, attname text NOT NULL, attval text, PRIMARY KEY (objectid, attname) ); CREATE TABLE attint ( objectid bigint REFERENCES objects ON DELETE CASCADE NOT NULL, attname text NOT NULL, attval integer, PRIMARY KEY (objectid, attname) ); /* more tables for other data types */

The name of the model is derived from the “att...” tables, which have the three columns: “entity ID”, “attribute name” and “value”.

There are several variations of the basic theme, among them:

- omit the

objectstable - add additional tables that define “object types”, so that each type can only have certain attributes

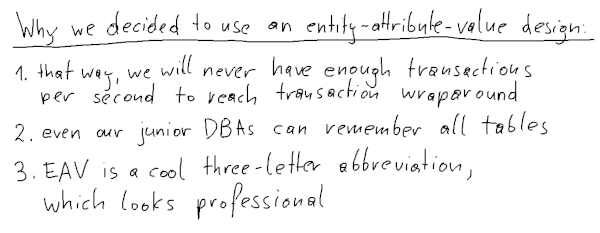

Why would anybody consider an entity-attribute-value design?

The principal argument I hear in support of the EAV design is flexibility. You can create new entity types without having to create a database table. Taken to the extreme, each entity can have different attributes.

I suspect that another reason for people to consider such a data model is that they are more familiar with key-value stores than with relational databases.

Performance considerations of entity-attribute-value design

In my opinion, EAV database design is the worst possible design when it comes to performance. You will never get good database performance with such a data model.

The only use cases where EAV shines are when it is used as a key-value store.

INSERT

Inserting an entity will look like this:

INSERT INTO objects (objectid) VALUES (42);

INSERT INTO attstring (objectid, attname, attval)

VALUES (42, ‘name’, ‘myobject’);

INSERT INTO attint (objectid, attname, attval)

VALUES (42, ‘start’, 100),

(42, ‘end’, 1000);

That means that we insert four rows into three tables and have four index modifications. Also, the three statements will require three client-server round trips. You can save on the round trips by using CTEs to turn that into a single statement, or by using the new pipeline mode of libpq. Still, it will be much more expensive than inserting a single table row.

DELETE

If you use cascading delete, you could do that in a single statement:

DELETE FROM objects WHERE objectid = 42;

Still, you will end up deleting four table rows and modifying four indexes. That’s much more work than deleting a single table row.

UPDATE

A single-column update could actually be faster with the EAV design, because only one small table row is modified:

SET attval = 2000

WHERE objectid = 42 AND attname = ‘end’;

But if you have to modify several columns, you will need to run several UPDATE statements. That will be slower than if you only had to modify a single (albeit bigger) table row.

SELECT

Querying the attributes of an entity requires a join:

SELECT as.attval AS "name", ai1.attval AS "start", ai2.attval AS "end" FROM objects AS o LEFT JOIN attstring AS as USING (objectid) LEFT JOIN attint AS ai1 USING (objectid) LEFT JOIN attint AS ai2 USING (objectid) WHERE objectid = 42 AND as.attname = 'name' AND ai1.attname = 'start' AND ai2.attname = 'end';

Alternatively, you could run three separate queries, one for each attribute. No matter how you do it, it will be less efficient than a single-row SELECT from a single table.

Single-column aggregates

As an example for a query that might be faster with the EAV model, consider a query that aggregates data from a single column:

SELECT sum(attval) AS total FROM othertab JOIN attint USING (objectid) WHERE othertab.col = 'x' AND attint.attname = 'attendants';

With a covering index on attint(objectid, attname) INCLUDE (attval), this could be quite a bit faster than aggregating a column from a wider table.

More complicated queries

After these examples, it is clear that writing more complicated queries will be a pain with the EAV design. Imagine a simple join:

SELECT e1a1.attval AS person_name, e1a2.attval AS person_id, e2a1.attval AS address_street, e2a2.attval AS address_city FROM attint AS e1a2 JOIN attstring AS e1a1 ON e1a2.objectid = e1a1.objectid LEFT JOIN attint AS e2a0 ON e1a2.attval = e2a0.attval LEFT JOIN attstring AS e2a1 ON e2a0.objectid = e2a1.objectid LEFT JOIN attstring AS e2a2 ON e2a0.objectid = e2a2.objectid WHERE e1a1.attname = 'name' AND e1a2.attname = 'persnr' AND e2a0.attname = 'persnr' AND e2a1.attname = 'street' AND e2a2.attname = 'city';

If you think that this query is hard to read, I agree with you. In a normal relational data model, the same operation could look like this:

SELECT person.name AS person_name, persnr AS person_id address.street, address.city FROM person LEFT JOIN address USING (persnr);

You can guess which query will perform better.

But we need an entity-attribute-value design for flexibility!

Relational data models are not famous for their flexibility. After all, that is the drive behind the NoSQL movement. However, there are good ways to deal with variable entities.

Creating tables on the fly

Nothing keeps you from running statements like CREATE TABLE and CREATE INDEX from your application. So if there is a limited number of entity types, and each type has a certain number of attributes, you can easily model that with a traditional relational model.

Certain problems remain:

- A data model that grows on the fly may not end up being well-designed. But that’s not different in the entity-attribute-value design.

- If the application has to create tables, it needs permission to do so. But today, when many applications create their own database tables anyway, few people will worry about that.

Creating tables on the fly will only work well if the set of attributes for each entity is well-defined. If that is not the case, we need a different approach.

Using JSON for a flexible data model

PostgreSQL has extensive JSON support that can be used to model entities with a variable number of attributes.

For that, you model the important and frequently occurring attributes as normal table columns. Then you add an additional column of type jsonb with a GIN index on it. This column contains the “rare attributes” of the entity as key-value pairs.

When using a model like this, you should take care that attributes

- used in joins

- on which you need a database constraint

- that you want to use in a

WHEREcondition with an operator different from “=”

are modeled as regular table columns.

Conclusion

Avoid entity-attribute-value designs in your relational database. EAV causes bad performance, and there are other ways to have a flexible data model in PostgreSQL.

Source: Laurenz Albe @ cybertec-postgresql.com